Thoughts on Static Code Analysis

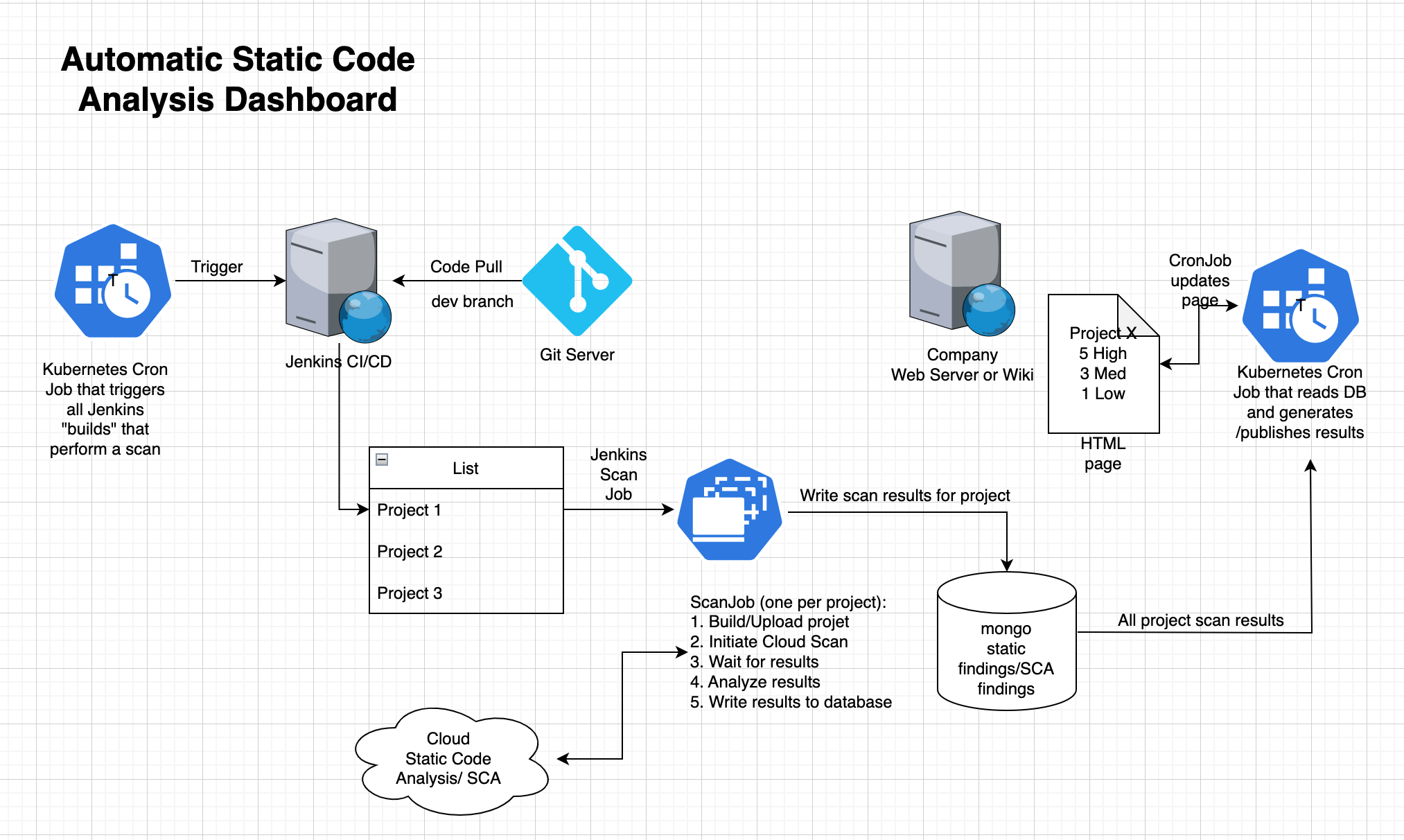

This is the first in a series of articles showing how to integrate a cloud-based Static Code Analysis tool into a CI/CD build pipeline. The result gives users a dashboard based on automatic software scans.

Cybersecurity issues and breaches can be found everywhere these days. One thing professional software development teams can do is perform static code analysis: developers submit a project's source code or binaries to an automated tool, which analyzes them to determine whether portions of the code introduce undue cybersecurity risk. These tools typically look for a host of potential issues, analyze and score them, and provide an actionable report on how to fix them.

Another area of risk is the use of third-party libraries. Regardless of which programming language one uses, nearly all projects rely on libraries. Libraries spare us from re-coding already-solved problems; all programmers stand on the shoulders of the programmers who came before them. Using a library, however, can bring cybersecurity risks into your product. The flaws recently discovered in OpenSSL 3.0.6 and the log4j bug are just two examples of defects recently found in libraries. Software Composition Analysis (SCA) tools scan your dependencies, identify the versions of the libraries you use, and match those versions against known defects. SCA tools will often also generate a so-called software bill of materials (SBOM): a list of the software you produce plus all of its direct dependencies and the dependencies of those dependencies.
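To make the SBOM idea concrete, here is a minimal sketch, assuming a hypothetical in-memory dependency graph: it walks every direct and transitive dependency of an application (the SBOM) and then looks each component up in a small advisory table. The package names `my-service`, `http-client`, and `logging-wrapper` are made up for illustration, and the advisory table is a hand-written excerpt keyed on exact versions. Real SCA tools resolve dependencies from build manifests and query full vulnerability databases that use version ranges.

```python
from collections import deque

# Hypothetical dependency graph: (name, version) -> list of direct dependencies.
DEPENDENCIES = {
    ("my-service", "1.0"): [("http-client", "4.0"), ("logging-wrapper", "1.1")],
    ("http-client", "4.0"): [("openssl", "3.0.6")],
    ("logging-wrapper", "1.1"): [("log4j-core", "2.14.1")],
    ("openssl", "3.0.6"): [],
    ("log4j-core", "2.14.1"): [],
}

# Illustrative advisory excerpt keyed on exact (name, version) pairs.
# Real vulnerability databases describe affected version *ranges*.
KNOWN_VULNERABILITIES = {
    ("openssl", "3.0.6"): ["CVE-2022-3602"],
    ("log4j-core", "2.14.1"): ["CVE-2021-44228"],
}


def build_sbom(root, graph):
    """Return every component reachable from root (root included), sorted.

    A breadth-first walk collects direct and transitive dependencies;
    the `seen` set prevents infinite loops on cyclic graphs.
    """
    seen = {root}
    queue = deque([root])
    while queue:
        component = queue.popleft()
        for dep in graph.get(component, []):
            if dep not in seen:
                seen.add(dep)
                queue.append(dep)
    return sorted(seen)


def match_vulnerabilities(sbom, advisories):
    """Map each SBOM component that has known advisories to its vulnerability IDs."""
    return {component: advisories[component] for component in sbom if component in advisories}


if __name__ == "__main__":
    sbom = build_sbom(("my-service", "1.0"), DEPENDENCIES)
    for name, version in sbom:
        print(f"{name} {version}")
    for (name, version), ids in match_vulnerabilities(sbom, KNOWN_VULNERABILITIES).items():
        print(f"{name} {version}: {', '.join(ids)}")
```

Note that neither vulnerable library is a direct dependency of `my-service`; both are found only because the walk follows the dependencies of dependencies, which is why an SBOM has to be transitive.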

When someone requests bringing Code Analysis, Software Composition Analysis, or Metrics into a project, the team often responds with 'Oh, do we need to do this? We are already late shipping feature XYZ.' The development team views this simply as added work. The team must not only do the scanning but also deal with its fallout: remediating the problem code the scan identifies.

Then there is the problem of doing this continually. The team can scan version 1.0 of the application and fix all High- and Medium-rated issues before the release. But then comes the brand-new version 2.0, and the team will need to repeat the process.

From a Senior Management point of view, it is good business to have teams mitigate cybersecurity issues that can be found before the product is released. It only takes one breach to severely damage your reputation. Lawsuits and other costs may then follow.

The sales team is happy to tell customers that the company has Static Code Analysis as part of its development process. Customers will be happy to know that the development team performs Software Composition Analysis to thwart cybersecurity issues. To compete in certain markets, you may need to show that your product has a reduced cybersecurity risk.

Customers like a better, more secure product. Keep in mind that customers may have to meet regulatory requirements or requirements from their own internal IT organizations.

But I am more interested in Static Code Analysis / Software Composition Analysis from a Software Team/Lead / DevOps / Management point of view. I think doing both analyses makes sense, and fixing the issues they find also makes sense. We do, however, need to balance fixing potential cybersecurity issues against Product Management's and the customer's need for new features and defect fixes. Here, planning and high-level stakeholder agreements matter.

As a team lead, I would like all my projects scanned automatically. I would like an automatically generated table showing the project and its #High, #Medium, and #Low defects. If a developer spends two weeks fixing cybersecurity issues, those numbers should visibly come down. Having a per-project history of this data, and possibly a graph, would be nice. Eliminating false positives from these reports (all tools produce some false positives) is also essential. I would like to have a web or wiki page like the one shown below. Veracode, a vendor my company partners with, is one of many vendors in this space.
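The table described above boils down to a simple aggregation. The following is a minimal sketch, assuming a hypothetical `Finding` record with a project name, a severity, and a reviewer-set false-positive flag; it is not Veracode's report format, and parsing a real scan report would come first. It counts findings per project and severity while skipping false positives, renders the counts as a Markdown table suitable for a wiki page, and computes the per-severity change between two snapshots.

```python
from collections import Counter
from dataclasses import dataclass

SEVERITIES = ("High", "Medium", "Low")


@dataclass(frozen=True)
class Finding:
    """Hypothetical, simplified scan finding (not a real vendor schema)."""
    project: str
    severity: str          # one of SEVERITIES
    false_positive: bool   # set when a reviewer marks the finding as a false positive


def summarize(findings):
    """Count findings per project and severity, ignoring false positives.

    Every project that appears in the input gets a row, even if all of
    its findings were marked as false positives.
    """
    counts = {}
    for finding in findings:
        per_project = counts.setdefault(finding.project, Counter())
        if finding.false_positive:
            continue
        per_project[finding.severity] += 1
    return counts


def to_markdown(counts):
    """Render the per-project counts as a Markdown table (one row per project)."""
    lines = ["| Project | #High | #Medium | #Low |", "|---|---|---|---|"]
    for project in sorted(counts):
        row = counts[project]
        cells = " | ".join(str(row[s]) for s in SEVERITIES)  # Counter returns 0 for missing keys
        lines.append(f"| {project} | {cells} |")
    return "\n".join(lines)


def delta(previous, current):
    """Per-severity change between two snapshots of one project (negative = fewer issues)."""
    return {s: current[s] - previous[s] for s in SEVERITIES}


if __name__ == "__main__":
    findings = [
        Finding("alpha", "High", False),
        Finding("alpha", "High", True),   # excluded: false positive
        Finding("alpha", "Low", False),
        Finding("beta", "Medium", True),  # excluded: false positive
    ]
    print(to_markdown(summarize(findings)))
```

Storing one such summary per project per day would provide the history mentioned above, and `delta` between two stored snapshots shows whether two weeks of remediation actually brought the numbers down.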

What is important here is that this page is updated automatically every single day and shows information that matches the automatically initiated scans for each project. This way, as a project lead, I can get an idea of where my project stands in terms of static code analysis. Software composition analysis is a related but orthogonal problem: the issues it discovers have a completely different and riskier remediation path. This set of blog posts will focus on static code analysis and provide a web-based dashboard that shows the current status of static code analysis findings.

Follow-up posts will present a design for a CI/CD system that automatically triggers builds, performs the scans, downloads the scan reports, analyzes the reports, and updates the status web page. The follow-up posts are more technical; click the link below for the next post.

Part 2 — High-Level Architecture